NVIDIA Jetson/RK3588/x86 + FPGA + AI Embodied AI Robot Controller with Large-Model and Real-Time Kernel Support

On March 5, 2025, the Government Work Report reviewed by the Third Session of the 14th National People's Congress explicitly proposed establishing a growth mechanism for investment in future industries and fostering emerging industries such as biomanufacturing, quantum technology, 6G, and embodied AI. This policy signal shows the importance the nation attaches to the embodied AI industry and gives its development strong momentum. The "Top Ten Trends in Humanoid Robots Outlook" released at the 2024 World Robot Conference pointed out that embodied AI refers to high-quality, high-performance intelligent systems capable of rapid and precise responses in highly dynamic environments. With continued technological breakthroughs and a maturing industrial ecosystem, embodied robots are moving faster from laboratories into real-world application scenarios and becoming a crucial force in building a modern industrial system.

Driven by both policy and technology, the embodied robot industry is undergoing a critical transition from "laboratory exploration" to "scenario implementation," and the move towards L4-level intelligence has become a key development direction. However, as robot intelligence moves from L3 (conditional autonomy) to L4 (high autonomy), one core contradiction becomes increasingly prominent: how can robots, like humans, possess both a "thinking" brain and an "executing" cerebellum?

Industry Pain Points

From L0 to L5: The Ultimate Challenge of Intelligent Transition

The autonomous capabilities of embodied robots are divided into six levels:

L0 (No Autonomy)

Only actuates its structure in response to human commands, with no intelligent design. Early industrial robotic arms are an example.

L1 (Assisted Control)

Can drive joints to perform functions such as drag teaching, recording, and playback.

L2 (Partial Autonomy)

Uses algorithms to plan motion trajectories and paths in order to complete specific actions.

L3 (Conditional Autonomy)

Has perception capabilities: uses sensors to acquire environmental information and can autonomously recognize, understand, and respond with pre-set actions, but still requires human supervision.

L4 (High Autonomy)

Has a certain level of cognition and can reason autonomously from observation, measurement, and presets to complete tasks without frequent human intervention.

L5 (Full Autonomy)

Fully possesses human-like thinking and creativity, and is capable of autonomous judgment, decision-making, and execution of complex tasks.
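The six levels above can be written down as a small ordered type, which makes the L3-to-L4 boundary explicit. The following is a minimal sketch in Python; the names `AutonomyLevel`, `has_perception`, and `needs_human_supervision` are illustrative assumptions and do not come from any standard or from Sienovo's software.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """The six autonomy levels listed above (member names are illustrative)."""
    L0_NO_AUTONOMY = 0
    L1_ASSISTED_CONTROL = 1
    L2_PARTIAL_AUTONOMY = 2
    L3_CONDITIONAL_AUTONOMY = 3
    L4_HIGH_AUTONOMY = 4
    L5_FULL_AUTONOMY = 5


def has_perception(level: AutonomyLevel) -> bool:
    # In the list above, sensor-based perception is first mentioned at L3.
    return level >= AutonomyLevel.L3_CONDITIONAL_AUTONOMY


def needs_human_supervision(level: AutonomyLevel) -> bool:
    # L3 "still requires human supervision"; L4 works "without frequent
    # human intervention". Treating L0-L2 as supervised is an assumption,
    # since those levels are driven by human commands or fixed programs.
    return level <= AutonomyLevel.L3_CONDITIONAL_AUTONOMY
```

Because `IntEnum` members are ordered, the step from L3 to L4 is simply the point where `needs_human_supervision` changes from `True` to `False`.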

The embodied robot industry is currently in a critical transition from L3 to L4. The core contradiction lies in achieving deep synergy between perception and decision-making: robots must process multi-modal data (vision, speech, point clouds) while also achieving millisecond-level motion control. Traditional single-domain controllers, such as pure AI computing solutions or pure motion control solutions, struggle to balance computing power and real-time performance.

Technological Leap

From "Single Brain" to "Dual Brain": Core Architectural Innovation for Humanoid Robots

In L4-level intelligence, humanoid robots need to mimic the collaborative mechanism of the human "brain" and "cerebellum":

● "Brain": Responsible for natural interaction, intent understanding, hierarchical planning, and error reflection, relying on high computing power to support large model inference (e.g., parsing the user command "please tidy the desk" and breaking it down into sub-tasks);

● "Cerebellum": Responsible for full-body coordination, stable walking, skill decomposition, and dynamic error correction, requiring high real-time control (e.g., avoiding collisions when both arms collaborate to grasp an object).

These two roles correspond to the computing power demands of multi-modal perception and the real-time requirements of motion control. Traditional solutions often fall short on one side or the other: pure AI computing platforms struggle to support high-precision motion, while real-time motion control systems lack environmental understanding. How to give robots both a "thinking" brain and an "executing" cerebellum, as humans have, has therefore become a key focus for the industry.
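The division of labor between the two domains can be illustrated with a toy simulation: a slow "brain" step that decomposes a command into sub-tasks, and a fast "cerebellum" step that runs on every control tick. This is only a sketch under stated assumptions. The classes `Brain` and `Cerebellum`, the lookup-table planner, the sub-task names, and the fixed three ticks per sub-task are all hypothetical and stand in for large-model inference and real motion control.

```python
from __future__ import annotations

from collections import deque
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Brain:
    """Slow domain: turns a user command into sub-tasks."""
    # Hypothetical lookup table standing in for large-model inference.
    plans: dict = field(default_factory=lambda: {
        "please tidy the desk": ["locate objects", "grasp object", "place object"],
    })

    def plan(self, command: str) -> list[str]:
        return list(self.plans.get(command.lower(), []))


@dataclass
class Cerebellum:
    """Fast domain: called once per control tick to execute the current sub-task."""
    ticks_per_subtask: int = 3  # assumed fixed duration, for illustration only
    queue: deque = field(default_factory=deque)
    log: list = field(default_factory=list)
    _remaining: int = 0
    _current: Optional[str] = None

    def submit(self, subtasks: list[str]) -> None:
        self.queue.extend(subtasks)

    def tick(self) -> str:
        # Pick up the next sub-task only when the previous one has finished.
        if self._remaining == 0 and self.queue:
            self._current = self.queue.popleft()
            self._remaining = self.ticks_per_subtask
        if self._remaining > 0:
            self._remaining -= 1
            action = self._current
        else:
            action = "hold"  # nothing queued: keep the robot in a safe pose
        self.log.append(action)
        return action


def run(command: str, ticks: int) -> list[str]:
    brain, cerebellum = Brain(), Cerebellum()
    # The brain plans once up front; the cerebellum then ticks at a fixed rate.
    cerebellum.submit(brain.plan(command))
    for _ in range(ticks):
        cerebellum.tick()
    return cerebellum.log
```

Calling `run("Please tidy the desk", 10)` yields three ticks for each of the three sub-tasks followed by one `"hold"` tick. The point of the split is that `tick()` never waits on `plan()`, so the control rate does not depend on how long inference takes.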

Sienovo's Answer

"Core Brain and Cerebellum" Controller Series

Based on the core "brain + cerebellum" requirements of embodied robots, Sienovo's series of controllers addresses industry bottlenecks one by one with a "perception brain + motion control cerebellum" dual-domain fusion architecture:

1. Performance: Balancing AI Inference and Real-time Motion Control

- Brain (Jetson platform): Provides 275+ TOPS of computing power and supports multi-modal data processing such as vision, speech, and point clouds, meeting the real-time inference needs of large models.

- Cerebellum (x86 platform): Uses Intel mobile processors with an optimized BIOS interrupt scheduling model to achieve 70-axis collaborative control, a 1000 Hz motion control cycle, and 35 μs instruction jitter, addressing the industry challenge of 200ms jitter in traditional EtherCAT networks.
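To put the quoted figures in proportion: a 1000 Hz control cycle has a 1 ms (1000 μs) period, so 35 μs of jitter is 3.5% of one cycle. The sketch below shows one way such a figure could be computed from recorded tick timestamps. The source does not say how the 35 μs value was measured, so the definition used here (largest deviation of any tick-to-tick interval from the nominal period) and the function names are assumptions.

```python
from __future__ import annotations


def period_us(rate_hz: float) -> float:
    """Nominal control period in microseconds for a given loop rate."""
    return 1_000_000.0 / rate_hz


def peak_jitter_us(timestamps_us: list[float], rate_hz: float) -> float:
    """Largest absolute deviation of a tick-to-tick interval from the nominal period."""
    if len(timestamps_us) < 2:
        raise ValueError("need at least two timestamps")
    nominal = period_us(rate_hz)
    intervals = [b - a for a, b in zip(timestamps_us, timestamps_us[1:])]
    return max(abs(interval - nominal) for interval in intervals)


def jitter_fraction(jitter_us: float, rate_hz: float) -> float:
    """Jitter expressed as a fraction of one control period."""
    return jitter_us / period_us(rate_hz)
```

For example, synthetic timestamps of `[0, 1000, 2035, 3000]` μs at 1000 Hz give intervals of 1000, 1035, and 965 μs, so `peak_jitter_us` returns 35.0 and `jitter_fraction(35, 1000)` returns 0.035.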

2

High Reliability: Stable Operation in Extreme Environments

-

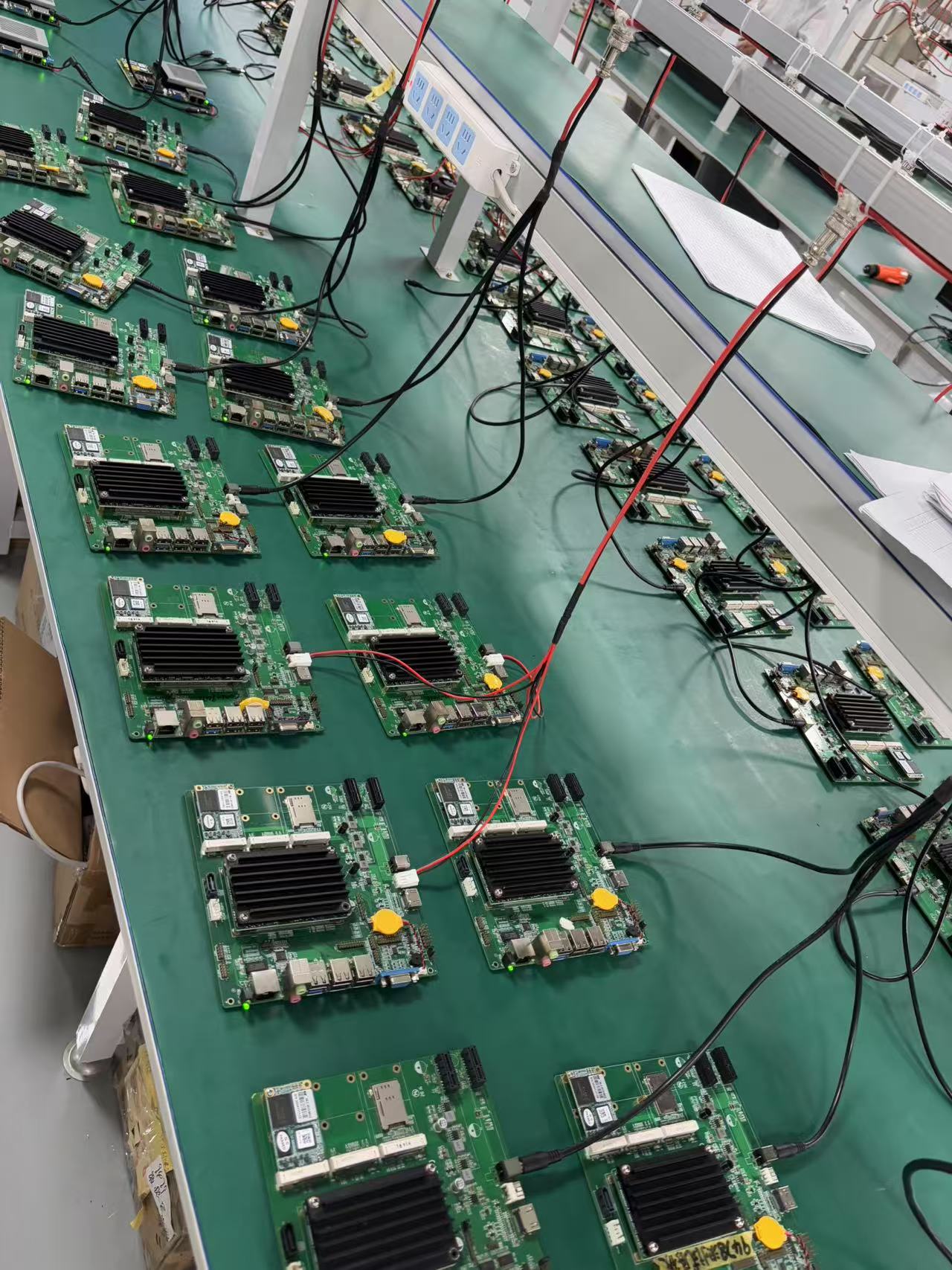

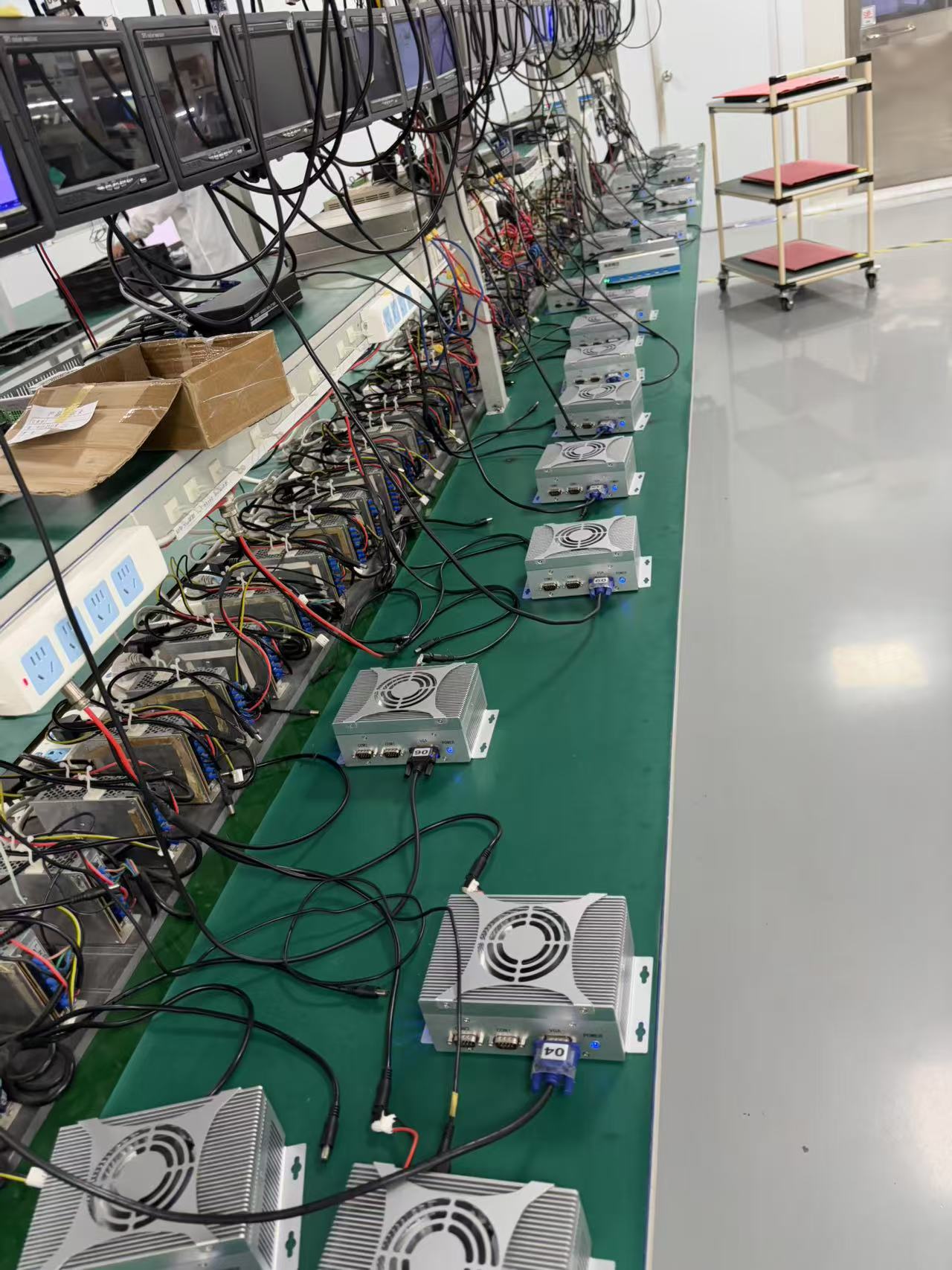

Automotive-grade testing system: Passed 23 categories and 1000+ tests, including functional tests, compatibility tests, environmental reliability tests, electromagnetic compatibility tests, safety tests, and regulatory compliance tests.

-

Three-proof design + intelligent cooling: Motherboard's three-proof coating resists corrosion and vibration, and the embedded cooling solution significantly reduces the overall footprint for equivalent performance, adapting to the compact structure of humanoid robots.

3. Multi-Scenario Applicability

- Service robots: Home companion robots, hotel service robots, and similar products rely on AI computing power for functions such as speech recognition and facial recognition.

- Industrial robots: Warehousing and logistics robots, for example, require high-performance real-time control and path planning.

- Special robots: Rescue robots, inspection robots, and similar machines must meet high-reliability and wide-temperature operating requirements in complex environments.

In the process of embodied robots transitioning from "tools" to "partners," Sienovo has redefined the technical boundaries of controllers. The "dual-brain collaborative" architecture not only resolves the core contradiction of L3 to L4 implementation but also, with its modular and highly reliable characteristics, drives robots from "industrial specialization" towards "consumer ubiquity."