3D Vision Application Cases: Guided Part Localization and Grasping

3D Guided Part Localization and Grasping

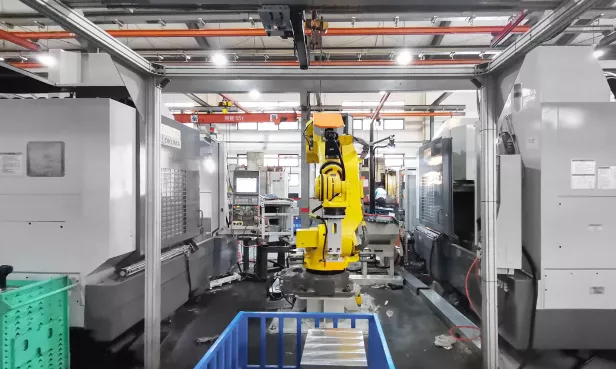

A Renowned Mold and Steel Group

Project Background

A renowned mold and steel group in Guangzhou needed 3D-guided part localization and grasping, together with 2D acquisition of identification information. The original setup relied on gantry cranes and manual handling, which posed serious safety risks.

Workflow

• The 3D camera locates the product, and a robot equipped with an electromagnet then grasps it.

• Before the product is placed onto the secondary positioning fixture, a laser and sensors check its specifications. A 2D camera then reads the QR code label to distinguish the product material.

Solution Highlights

• Vision Algorithm: Highly reflective workpieces cause specular reflections that degrade the point cloud. A dedicated vision algorithm reduces the impact of these reflections on recognition, so the system can still complete the grasp even when the point cloud camera's imaging is suboptimal.

• Point Cloud Clustering: The product range is large and specifications differ significantly, so point cloud clustering is used to handle incoming products that are not known in advance. This addresses the customer's project challenges while reducing project costs.

• Integrated 3D Guided Grasping and 2D Recognition Workstation: To meet the customer's production needs, a single workstation was designed to combine 3D guided grasping with 2D recognition. A 2D camera function for reading QR code labels on workpieces was added, which significantly improved production line productivity.

• Gripper Design: The electromagnet gripper is compatible with multiple product models and handles heavy products as well as products with large thickness variations.
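The point cloud clustering mentioned above can be illustrated with a minimal sketch. The source does not say which clustering method the vendor uses, so this example assumes plain Euclidean (single-linkage) clustering: points closer than a chosen radius end up in the same cluster, and each cluster is treated as one candidate product. The function name `euclidean_clusters` and the parameters `radius` and `min_size` are hypothetical.

```python
from collections import defaultdict, deque
from itertools import product
from math import dist, floor


def euclidean_clusters(points, radius, min_size=1):
    """Group 3D points so that points within `radius` of each other share a cluster.

    Illustrative only: an assumed technique, not the vendor's algorithm.
    Returns a list of clusters, each a sorted list of point indices.
    """
    # Hash points into cubic cells of edge `radius`, so a point's neighbours
    # can only lie in its own cell or one of the 26 adjacent cells.
    cells = defaultdict(list)
    for idx, p in enumerate(points):
        cells[tuple(floor(c / radius) for c in p)].append(idx)

    offsets = list(product((-1, 0, 1), repeat=3))
    seen = [False] * len(points)
    clusters = []
    for seed in range(len(points)):
        if seen[seed]:
            continue
        seen[seed] = True
        queue, members = deque([seed]), []
        # Breadth-first growth of the cluster from the seed point.
        while queue:
            i = queue.popleft()
            members.append(i)
            cx, cy, cz = (floor(c / radius) for c in points[i])
            for dx, dy, dz in offsets:
                for j in cells.get((cx + dx, cy + dy, cz + dz), ()):
                    if not seen[j] and dist(points[i], points[j]) <= radius:
                        seen[j] = True
                        queue.append(j)
        # Small clusters are treated as noise and dropped.
        if len(members) >= min_size:
            clusters.append(sorted(members))
    return clusters


# Two separate objects plus one stray point (coordinates in metres, made up).
cloud = [(0, 0, 0), (0.01, 0, 0), (0.02, 0, 0), (1, 1, 0), (1.01, 1, 0), (5, 5, 5)]
objects = euclidean_clusters(cloud, radius=0.05, min_size=2)
```

Because clustering needs no per-product model, it can separate parts the system has never seen. In practice the radius would have to be tuned to the sensor resolution and the gaps between parts.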

Disordered Picking of Round Bars from Deep Bins

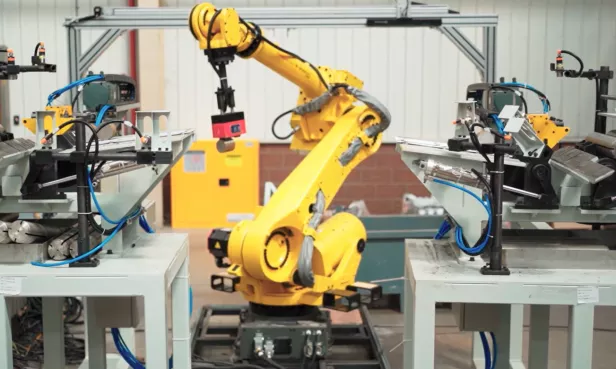

A Large Automotive Parts Factory

Project Background

The client is a renowned foreign-funded automotive parts enterprise. Their factory in Northwest China required 3D vision to automate the feeding of cylindrical rods. The round bars were placed randomly in deep bins, had reflective surfaces, and varied in specifications. They needed to be placed in a specific orientation for downstream processes.

Workflow

• Bins containing round bars are manually transported to the robot workstation, where vision locates the bin.

• Vision identifies the pose of the round bars and guides the robot to perform collision-free grasping.

• The robot places the round bars into a chute for secondary positioning, then grasps them again for precise feeding.

• Repeat the above actions until the bin is empty.

Solution Highlights

• Uses an XM-GX-L structured light camera combined with a sliding module to reconstruct high-precision point clouds of stacked round bars at two workstations.

• Robust visual recognition and automatic collision-free trajectory calculation handle difficult cases such as vertically standing round bars and bars lying in bin corners, enabling efficient bin emptying.

• Adapts to non-standard rectangular bins, ensuring stable and collision-free grasping.

• Highly compatible gripper, adaptable to various specifications of round bars, enabling flexible picking.
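To make the idea of round bar pose recognition more concrete, the sketch below estimates the axis of a single bar from its segmented points and flags bars that stand vertically. It assumes principal component analysis computed by power iteration, which is a common generic approach and not necessarily what this system uses. The function names and the 15-degree threshold are hypothetical.

```python
from math import acos, degrees, sqrt


def principal_axis(points, iterations=100):
    """Unit direction of greatest spread of a 3D point set, via power iteration.

    Illustrative only. The fixed start vector fails in the rare case that it
    is exactly orthogonal to the true axis.
    """
    n = len(points)
    mean = [sum(p[k] for p in points) / n for k in range(3)]
    centered = [[p[k] - mean[k] for k in range(3)] for p in points]
    # 3x3 covariance matrix of the centred points.
    cov = [[sum(c[a] * c[b] for c in centered) / n for b in range(3)]
           for a in range(3)]
    v = [1.0 / sqrt(3.0)] * 3
    for _ in range(iterations):
        w = [sum(cov[a][b] * v[b] for b in range(3)) for a in range(3)]
        norm = sqrt(sum(x * x for x in w))
        if norm == 0.0:  # all points coincide; no axis can be defined
            break
        v = [x / norm for x in w]
    return v


def tilt_from_vertical_deg(axis):
    """Angle in degrees between an axis and the Z axis, ignoring the axis sign."""
    norm = sqrt(sum(x * x for x in axis))
    return degrees(acos(min(1.0, abs(axis[2]) / norm)))


def is_standing(axis, max_tilt_deg=15.0):
    """True if the bar is within `max_tilt_deg` of vertical (assumed threshold)."""
    return tilt_from_vertical_deg(axis) <= max_tilt_deg


# Synthetic bars: one standing upright, one lying along X.
standing_bar = [(0.0, 0.0, 0.1 * i) for i in range(10)]
lying_bar = [(0.1 * i, 0.0, 0.0) for i in range(10)]
standing_axis = principal_axis(standing_bar)
lying_axis = principal_axis(lying_bar)
```

A standing bar typically needs a different approach direction than a lying one, so a flag like this could feed the choice of grasp strategy before trajectory planning.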

Caliper Loading and Unloading

A Large Automotive Parts Factory

Project Background

The client is a large automotive parts enterprise in East China that needed 3D vision to automate the loading and unloading of caliper housings. The caliper housings are irregularly shaped castings, stacked face-to-face in layers inside deep bins, where they tend to interlock. The front and back of a workpiece look very similar, so workpieces lying upside down must be identified and placed onto a flipping fixture. Both the workpieces and the trays are tilted to some degree. After processing, the caliper housings may carry residual cutting fluid on their surfaces and must be re-stacked layer by layer into bins for unloading.

Workflow

• Once the bin is in position, vision identifies each caliper's pose and distinguishes front from back, guiding the robot to grasp it and place it onto the corresponding fixture.

• After each layer is cleared, vision guides the quick-change gripper to pick up the tray, proceeding with the next layer's loading until the bin is empty.

• Once workpiece processing is complete, vision guides the robot to place the tray. After a quick gripper change, it then guides the robot to unload workpieces into the designated grid slots.

• After each layer is unloaded, the actions of placing a new tray and unloading are repeated until completion.
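The loading half of this workflow can be sketched as a simple action sequence: every workpiece in a layer is routed by its detected orientation, and the tray is removed once the layer is clear. This is a hypothetical outline only. The orientation labels, fixture names, and action tuples are assumptions for illustration and do not come from the actual controller.

```python
def loading_actions(layers):
    """Yield robot actions for emptying a bin layer by layer.

    `layers` lists the layers from top to bottom; each layer is a list of
    detected orientations, either "upright" or "upside_down".
    All labels and action names here are hypothetical.
    """
    for layer_index, layer in enumerate(layers):
        for part_index, facing in enumerate(layer):
            # Upside-down parts go to the flipping fixture first.
            target = "flipping_fixture" if facing == "upside_down" else "process_fixture"
            yield ("pick_part", layer_index, part_index, target)
        # Layer cleared: change gripper and remove the tray underneath.
        yield ("change_gripper_and_pick_tray", layer_index)


plan = list(loading_actions([["upright", "upside_down"], ["upright"]]))
```

Here `plan` holds three pick actions and two tray removals, in the order the robot would execute them.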

Solution Highlights

• For loading, an XM-GX-L camera mounted on a mobile module provides high-precision 3D pose recognition of bins, tilted calipers, and black trays.

• For unloading, an XM-SP-L camera mounted on a mobile module recognizes bin and placement slot positions to within ±3 mm.

• Automatically calculates collision-free trajectories, solving the challenge of grasping workpieces in corners.

• Quick-change gripper design, compatible with grasping various specifications of workpieces and trays.
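Since both workpieces and trays are tilted, one way to quantify that tilt is to fit a plane to the points on the tray surface and measure the angle between the plane and the horizontal. The sketch below assumes an ordinary least-squares fit of z = a*x + b*y + c. It is an illustration under that assumption, not the method used in this project, and the function names are hypothetical.

```python
from math import atan, degrees, hypot


def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def fit_plane(points):
    """Least-squares fit of z = a*x + b*y + c to 3D points; returns (a, b, c)."""
    n = float(len(points))
    sx = sum(p[0] for p in points)
    sy = sum(p[1] for p in points)
    sz = sum(p[2] for p in points)
    sxx = sum(p[0] * p[0] for p in points)
    syy = sum(p[1] * p[1] for p in points)
    sxy = sum(p[0] * p[1] for p in points)
    sxz = sum(p[0] * p[2] for p in points)
    syz = sum(p[1] * p[2] for p in points)
    # Normal equations of the least-squares problem.
    m = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, n]]
    rhs = [sxz, syz, sz]
    d = det3(m)
    if abs(d) < 1e-12:
        raise ValueError("XY footprint is collinear or has too few points")
    coeffs = []
    for k in range(3):  # Cramer's rule: replace column k with the right-hand side
        mk = [row[:] for row in m]
        for r in range(3):
            mk[r][k] = rhs[r]
        coeffs.append(det3(mk) / d)
    return tuple(coeffs)


def tilt_deg(a, b):
    """Angle in degrees between the plane z = a*x + b*y + c and the horizontal."""
    return degrees(atan(hypot(a, b)))


# Synthetic tray surface sampled on a 4x4 grid (units arbitrary).
tray = [(x, y, 0.1 * x - 0.05 * y + 5.0) for x in range(4) for y in range(4)]
a, b, c = fit_plane(tray)
tray_tilt = tilt_deg(a, b)
```

With real sensor data, outliers such as reflections from residual cutting fluid would usually call for a robust fit (for example RANSAC) rather than plain least squares.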

Flywheel Housing Loading and Unloading

A Large Automotive Parts Factory

Project Background

The client is a large automotive parts manufacturer and assembler in Southwest China that needed 3D vision to support grinding machine operations, with automatic grasping for loading and precise positioning for unloading. The incoming parts are aluminum alloy castings with complex structures, stacked layer by layer in deep bins and tilted to some degree. Because precise laser marking follows grasping, the visual pose estimate must be highly accurate.

Workflow

• After the bin is manually placed in position, vision captures an image to identify the bin and workpiece poses and guides the robot to perform bin picking.

• Vision performs a secondary, precise positioning of the workpiece pose for laser marking. The robot then places the workpiece into the machine tool for a series of processes including grinding, cleaning, flipping, and drying.

• After each layer of workpieces is cleared, vision guides the grasping of the separator plate, continuing with the loading of the next layer until the bin is completely empty.

• Once workpiece processing is complete, the robot retrieves the workpiece. Vision then determines the placement pose, guiding the robot to complete the unloading.

Solution Highlights

• Uses an XM-SP-L large field-of-view camera to recognize and locate workpieces, bins, and separator plates, achieving stable grasping and 100% bin emptying.

• Secondary precise visual positioning with an accuracy of ±1 mm, meeting the marking requirements.

• Vision captures images of the finished workpiece unloading area, determines the placement pose, and guides collision-free and accurate unloading.
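An accuracy figure such as ±1 mm can be checked during commissioning by comparing measured positions against a reference. The sketch below assumes the tolerance applies to each axis independently. The source does not state whether it is per axis or a total distance, and the function names and sample values are hypothetical.

```python
def max_axis_deviation(measured_mm, reference_mm):
    """Largest absolute per-axis difference between two positions, in mm."""
    return max(abs(m - r) for m, r in zip(measured_mm, reference_mm))


def within_tolerance(measured_mm, reference_mm, tol_mm=1.0):
    """True if every axis deviates by at most `tol_mm` (assumed per-axis rule)."""
    return max_axis_deviation(measured_mm, reference_mm) <= tol_mm


# Made-up positions in millimetres.
reference = (100.0, 50.0, 10.0)
good = within_tolerance((100.4, 50.2, 10.0), reference)
bad = within_tolerance((101.5, 50.0, 10.0), reference)
```

If the requirement were instead a bound on total 3D distance, the same check would compare the Euclidean distance against the tolerance.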